Over the past two years, generative AI has moved from novelty to near-ubiquity across the enterprise. Tools such as OpenAI's ChatGPT, Microsoft Copilot, and Google Gemini have given organisations scalable access to systems that draft documents, generate code, and accelerate creative workflows. The productivity gains have been real, but bounded: these tools respond when prompted, produce output when asked, and stop when the conversation ends.

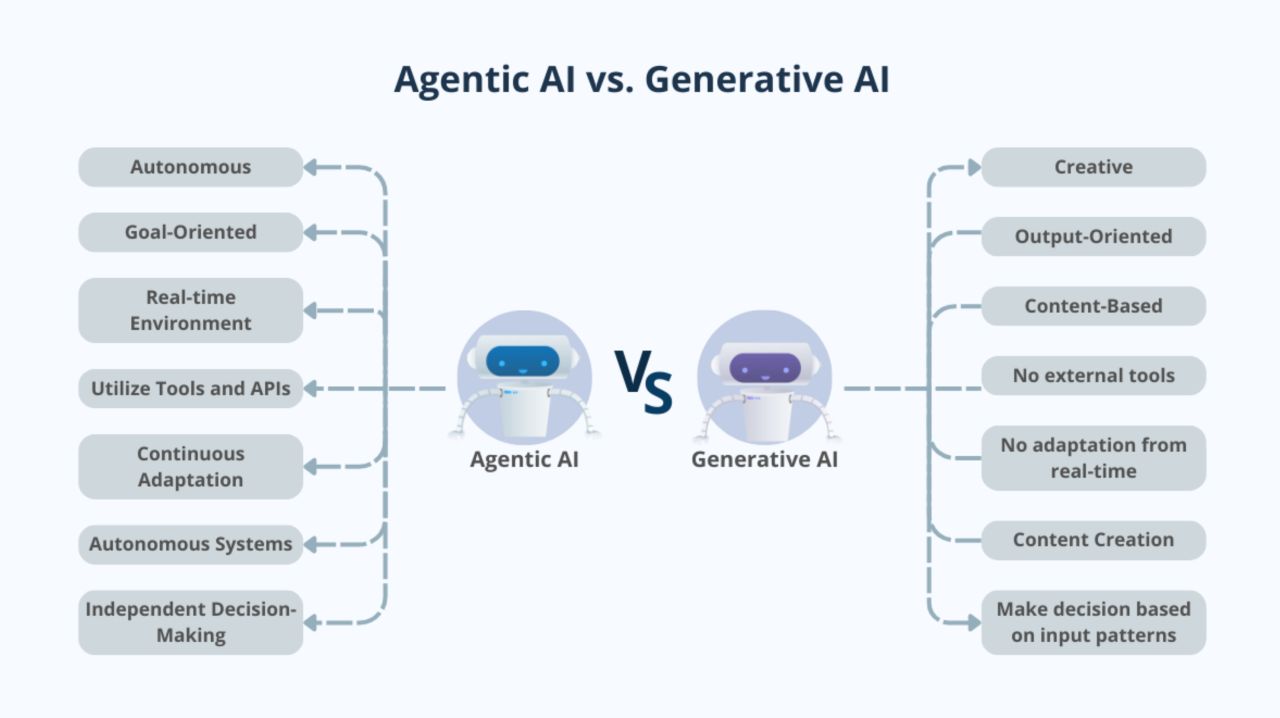

2026 marks a structural shift. The frontier of enterprise AI is no longer about generating better answers to human questions — it is about building systems that plan, decide, execute, and optimise entire workflows with minimal human intervention. This is the move from generative AI to agentic AI.

Agentic AI systems do not wait for prompts. They monitor environments, interpret signals, formulate plans, take action, and learn from outcomes in continuous loops. Where a generative tool might draft a supply chain report when asked, an agentic system detects a supplier disruption, evaluates alternative routes, renegotiates delivery windows, and updates production schedules — before a human operator has finished reading the morning briefing. Organisations that treat this shift as merely a technology upgrade will find themselves outpaced.

At DHJVC, we work with forward-thinking leaders who are already designing AI-native operating models through concrete action across four critical dimensions: governance, process design, data architecture, and leadership alignment.

Embedding Governance by Design

The most common mistake organisations make when deploying autonomous systems is treating governance as an afterthought — a compliance layer bolted on once the technology is already running. The leaders getting this right are embedding governance into the architecture of their agentic systems from the outset.

In practice, this means boards are establishing AI oversight committees with clearly defined decision rights and explicit escalation thresholds. A financial services firm, for example, might allow an agentic system to autonomously approve credit decisions below a certain risk threshold, but require human sign-off — with full audit trail — for any decision that exceeds predefined exposure limits. The governance framework specifies not just what the agent can do, but the conditions under which it must stop or escalate.
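The escalation rule described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference implementation: the names `Policy`, `CreditRequest`, and `route`, along with the two threshold fields, are hypothetical, and a real deployment would take its limits from the oversight committee's approved policy.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum


class Outcome(Enum):
    AUTO_APPROVE = "auto_approve"
    ESCALATE = "escalate_to_human"


@dataclass(frozen=True)
class Policy:
    # Hypothetical limits; real values would be set by the oversight committee.
    max_risk_score: float  # agent may act alone at or below this score
    max_exposure: float    # agent may act alone at or below this exposure


@dataclass(frozen=True)
class CreditRequest:
    request_id: str
    risk_score: float
    exposure: float


def route(request: CreditRequest, policy: Policy, audit_log: list) -> Outcome:
    """Decide whether the agent may approve alone, and record why."""
    reasons = []
    if request.risk_score > policy.max_risk_score:
        reasons.append("risk_score above threshold")
    if request.exposure > policy.max_exposure:
        reasons.append("exposure above limit")

    outcome = Outcome.ESCALATE if reasons else Outcome.AUTO_APPROVE

    # Every decision is logged, whether or not it was escalated.
    audit_log.append({
        "request_id": request.request_id,
        "outcome": outcome.value,
        "reasons": reasons,
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    })
    return outcome
```

The point of the sketch is that the stop-or-escalate conditions live in an explicit, reviewable policy object rather than inside the agent's own reasoning, and that the audit entry is written on every path.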

This approach also addresses the evolving regulatory landscape. The EU AI Act, whose obligations are being phased in, requires high-risk AI systems to be designed so that people can effectively oversee them while they are in use (Article 14). Governance by design is not just good practice; it is becoming a legal requirement.

Redesigning Processes for Autonomy

Deploying agentic AI into processes designed for human execution is like fitting an autopilot into a car that still requires a hand crank to start. The technology will underperform, and the organisation will conclude, incorrectly, that it is not ready.

The organisations seeing genuine returns redesign processes around the capabilities of autonomous systems. Consider a logistics firm that traditionally relied on human dispatchers to plan daily fleet routes. By restructuring operations so that AI agents continuously ingest live traffic, fuel pricing, and demand signals, the firm shifts the human role from routine dispatching to exception management — supervisors intervene only when an agent flags an anomaly it cannot resolve.

This is not automation in the traditional sense. Traditional automation follows rigid rules; agentic systems adapt, re-plan when conditions change, and progressively handle a wider range of scenarios without human input. The design challenge is defining the right boundary between agent autonomy and human oversight — a boundary that should evolve as the system demonstrates reliability.

Building Secure Data Architecture

Agentic AI is only as capable as the data it can access, and most enterprises still operate with fragmented, siloed data estates never designed for real-time autonomous consumption. A manufacturer may run separate systems for ERP, IoT telemetry, supply chain management, and maintenance scheduling — each with its own data model and update cadence. An agent tasked with predicting equipment failure cannot function if it must query four disconnected systems with inconsistent timestamps.

The foundational work is consolidating these silos into a unified, real-time data layer with consistent governance, clear lineage, and robust access controls. When this foundation is in place, agents can correlate vibration data from a factory floor sensor with procurement lead times to autonomously order replacement parts before a failure occurs — eliminating unplanned downtime rather than merely reducing response time.
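To make the vibration-and-lead-time example concrete, here is a deliberately simple Python sketch. It assumes a straight-line trend in daily vibration readings and a known failure threshold, both of which are illustrative simplifications; the function names, the safety margin, and the linear model are assumptions introduced here, and a production system would likely use a properly validated failure model.

```python
def days_until_threshold(days, readings, threshold):
    """Extrapolate a least-squares line through the readings and return
    how many days after the last reading it crosses the threshold.
    Returns None when there is no upward trend to extrapolate."""
    n = len(days)
    if n < 2:
        return None
    mean_x = sum(days) / n
    mean_y = sum(readings) / n
    sxx = sum((x - mean_x) ** 2 for x in days)
    if sxx == 0:
        return None
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(days, readings))
    slope = sxy / sxx
    if slope <= 0:
        return None
    intercept = mean_y - slope * mean_x
    crossing_day = (threshold - intercept) / slope
    # Clamp at zero: the trend line may already be past the threshold.
    return max(0.0, crossing_day - days[-1])


def should_order(days_to_failure, lead_time_days, safety_margin_days=2.0):
    """Order when the projected failure falls inside the procurement
    lead time plus a (hypothetical) safety margin."""
    if days_to_failure is None:
        return False
    return days_to_failure <= lead_time_days + safety_margin_days
```

The comparison in `should_order` only works if sensor readings and procurement lead times share one consistent, trusted view of time, which is the purpose of the unified data layer.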

The data architecture must also be secure by design. Agentic systems operating across multiple enterprise platforms represent a significant attack surface, making encryption, zero-trust access models, and continuous behavioural monitoring prerequisites for deployment at scale.

Aligning Leadership and Risk Strategy

Perhaps the most underestimated dimension of this transition is the alignment required at the leadership level. Too often, AI strategy sits exclusively within the technology function, disconnected from the operational and financial leadership that determines whether autonomous systems create or destroy value.

The organisations making the most progress are those where CFOs and COOs jointly define AI performance KPIs tied to operational resilience — not just cost efficiency. Reducing headcount is a narrow measure of AI success. More meaningful indicators include time-to-decision compression, exception rates in autonomous processes, and the speed at which the organisation adapts to disruptions.
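Two of these indicators reduce to simple arithmetic over a decision log. The Python sketch below is illustrative only: the record fields `seconds` and `escalated` are hypothetical, and the definitions used (share of decisions escalated to a human, and baseline time divided by current median time) are one reasonable reading of the indicators rather than a standard.

```python
from statistics import median


def exception_rate(decisions):
    """Share of decisions the agent escalated to a human."""
    if not decisions:
        return 0.0
    escalated = sum(1 for d in decisions if d["escalated"])
    return escalated / len(decisions)


def median_time_to_decision(decisions):
    """Median seconds from request to decision."""
    return median(d["seconds"] for d in decisions)


def compression_ratio(baseline_seconds, current_seconds):
    """How many times faster decisions are than the pre-agent baseline."""
    return baseline_seconds / current_seconds
```

Tracking the exception rate alongside decision speed matters because a system that is fast but escalates most cases has not moved much work away from people.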

This cross-functional alignment also surfaces risks that a purely technical assessment would miss. When a COO understands how an agentic system makes routing decisions, and a CFO understands the financial exposure if that system errs at scale, the organisation can design risk strategies that are genuinely proportionate.

From Experimentation to Systemic Integration

The question facing enterprise leaders in 2026 is no longer "How do we use AI tools?" It is "How do we architect organisations where AI can act proactively and responsibly?" The first question leads to pilot projects and marginal productivity gains. The second leads to a rethinking of how work is structured, how decisions are made, and how value is created.

The organisations that will define the next era of competitive advantage are those moving from experimentation to systemic integration — embedding agentic capabilities into their core operating models with the governance, data infrastructure, process design, and leadership alignment to support them. This is not a technology project. It is an organisational transformation.

At DHJVC, we have spent over a decade helping organisations navigate structural shifts — from cloud migration to digital operating model design. If your organisation is preparing for the move to agentic AI, we would welcome the opportunity to explore how we can help you design, govern, and scale your AI-native operating model.